At our recent HC2 Summer School we had a talk from Atau Tanaka on Embodied Sonic Interaction and how we might explore “possibilities of actions in the technologically augmented everyday”. An important part of this idea is the psychological basis for affordance and the potential for musical and sonic affordance. In another talk, Joe Paradiso suggested that when thinking about human-computer interaction we should be wary of moving away from interfaces that are based on our hands. You only have to look at the cortical homunculus to realise that we have a strong bias for manual interaction with the world.

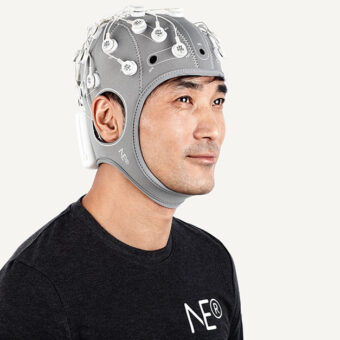

What struck me most about this is what it might mean for Brain-Computer Interfaces as a means to interact with the world. On the one hand (!), if you are using a BCI for control, even if it is a left-hand/right-hand Motor Imagery BCI (where we simply imagine moving our hands to generate signals), we are not in any real sense taking advantage of the motor cortex bias towards manual tasks. On the other, you also lose most forms of sensory feedback when using a BCI. You no longer have haptic, proprioceptive or cutaneous cues when moving an object, for example. These subconscious cues help us make continuous predictions and adjust our movements based on the feedback received. This is a very important mechanism for us as agents in a physical world, and disturbances of this mechanism can be extremely serious and have even been linked to schizophrenia.

What does this mean for affordance and BCI design? Should we concentrate on prosthetic solutions that attempt to provide users with something as close to a hand as possible, so that we take advantage of our innate manual bias? Will these ever work well if we do not also provide feedback to the user in a modality similar to haptic or proprioceptive feedback?

In BCI we often talk about the affordances of the brain itself, but this generally refers to visual modalities such as P300 or SSVEP, where we take advantage of the brain's response to visual cues. This is far removed from the physical world in which we live.

As many BCIs for control and communication are limited to control of software via a graphical interface, affordance is perhaps not crucial.

Regarding feedback, Jonathan Wolpaw, one of the leaders in the field of non-invasive BCI, often refers to this issue and noted in his recent book, Brain-Computer Interfaces, that high-speed interaction will likely need multi-modal feedback of the form mentioned above.

Generally I think our essential embodiment cannot be ignored as our BCI systems become more advanced and user expectations increase.