The term affective computing commonly describes any computing that involves emotions, treating the concepts of emotion and affect as synonyms. This involvement can relate to detecting, generating and/or mimicking emotions. No distinction is made between fake and real emotions, or between human and synthetic ones. If you have a computing process dealing with emotions in any way, you’ve got an affective computing system.

Affective computing draws on many different knowledge areas and technologies. One of the disciplines on which emotions typically have a strong impact is human-computer interaction (HCI). Emotions play a crucial role in human interactions and, therefore, the ability to deal with emotions that affective computing provides gives HCI enormous possibilities to grow and improve. An interesting overview of affective computing was written by Rosalind W. Picard in 1999.

Tools for affective computing systems

Technically speaking, affective computing systems rely on many different tools and technologies. Many tools are useful for emotion detection and processing, and many other technologies can be combined with affective computing techniques so that each improves the performance of the other.

The list of tools and approaches used to detect emotions is growing every day, but here are the most commonly used:

- Visual processing techniques for emotion recognition. These include facial expression recognition, by which emotions are detected from the expressions shown on the subject’s face. Paul Ekman’s work in this area has become well known to the mainstream thanks to the popular TV show “Lie to Me”. Body gesture recognition is also a powerful tool for detecting emotions, and it has become more widespread thanks to advances in body language recognition, as well as to the development of commercial gaming platforms that detect body poses.

- Physiological signal processing techniques for emotion recognition. These techniques process physiological indicators to detect emotions that a person does not purposely express. Such indicators can be:

  - Brain activity, measured with electroencephalography (EEG; check out our post on Emotion Detection from EEG: https://blog.neuroelectrics.com/blog/bid/231160/emotion-recognition-using-eeg) or functional magnetic resonance imaging (fMRI).

  - Heart activity, where measures like heart rate (HR) or heart rate variability (HRV) are commonly used for emotion detection.

  - Galvanic skin response, which is commonly used to detect levels of emotional activity in humans.

- Speech has proved very useful for the detection of several emotional states such as frustration, anxiety, anger or happiness.
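To make the heart activity indicators above a bit more concrete, here is a minimal sketch of how HR and two widely used time-domain HRV measures (SDNN and RMSSD) can be computed from a series of beat-to-beat (RR) intervals. The function names and the sample values are our own assumptions for illustration, and the RR intervals are assumed to be already extracted and given in milliseconds. Mapping these numbers to an actual emotional state is a separate step that is not shown here.

```python
import math
from statistics import mean, stdev


def heart_rate_bpm(rr_ms):
    """Mean heart rate in beats per minute from RR intervals in milliseconds."""
    return 60000.0 / mean(rr_ms)


def sdnn(rr_ms):
    """SDNN: sample standard deviation of the RR intervals, in milliseconds."""
    return stdev(rr_ms)


def rmssd(rr_ms):
    """RMSSD: root mean square of successive RR interval differences, in ms."""
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(mean(d * d for d in diffs))


if __name__ == "__main__":
    # Hypothetical RR intervals (ms), made up for illustration only.
    rr = [800, 810, 790, 805, 795]
    print("HR (bpm):", heart_rate_bpm(rr))
    print("SDNN (ms):", sdnn(rr))
    print("RMSSD (ms):", rmssd(rr))
```

In practice these features would be computed over a sliding window of the recording and passed, together with other indicators, to whatever emotion recognition model is being used.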

Affective computing applications

Affective computing is also often applied together with immersive technologies like virtual reality or augmented reality. This combination aims to boost both the outcomes of affective computing, such as emotion recognition, and the effects of immersive technologies, such as engagement. The Beaming Project brings affective computing and immersive technologies together to put telepresence into action.

As for other specific applications, advances in affective computing have led to the development of new techniques and applications in many different areas:

- Medical applications: The detection of emotions by computers is very useful for medical applications, not only for treating emotion-related problems, but also for incorporating emotional indicators as a diagnostic tool.

- Content selection and data mining: The ability to detect the positiveness or satisfaction of a subject has led to better recommendation systems and to more satisfactory content browsing systems.

- E-learning: Emotion recognition can be very useful for overcoming the e-learning problems that stem from the absence of human company.

- Interface improvement: As mentioned, human-computer interaction is an area that benefits greatly from improvements in affective computing. Interfaces can be designed to respond better to the emotional state of the user. Apple’s Siri, for example, detects the user’s tone of voice and uses it to discriminate among different possible resulting actions. Adaptive interfaces that respond to users’ stress levels, as well as interfaces that adapt to the user’s mood to ease their response to negative outcomes, are also being designed.

- Neuromarketing: The ability to detect a person’s emotional response without having to ask him or her has contributed to the development of neuromarketing, a discipline of marketing research that guides the design of products and marketing strategies according to the unbiased response of the subject. Check out our post https://blog.neuroelectrics.com/blog/bid/234788/Is-Neuromarketing-about-to-Explode to understand the impact of neuroscience and affective computing on marketing. You can also follow the Neuroscience Marketing blog for a wide and continuous look at neuroscience, affective computing and marketing.

To wrap up, a very interesting research group to follow on affective computing is the one led by Rosalind W. Picard at MIT.

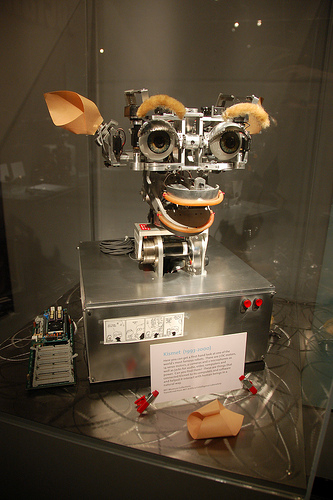

photo credit: iMorpheus via photopin cc

photo credit: Chris Devers via photopin cc