How many times have you wondered how people feel while using your product or service? How many times have you wished you could get that answer immediately, without making them fill out tiresome questionnaires? If you have found yourself in this situation, then our ExperienceLab services are for you! Emotions are an intrinsic part of User Experience and, as such, need to be measured as well. The most common way of measuring a user’s emotions is to ask them directly. However, most people are not skilled at articulating how they feel, and even when they are, their answers are often influenced by their general emotional and physical state (e.g., fatigue) or by personal beliefs and biases. Our system goes beyond these limitations by measuring emotions directly from the user’s brain signals, which are more objective and harder to manipulate.

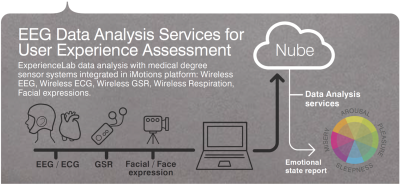

ExperienceLab is our data analysis platform. It integrates wireless, medical-grade systems for electroencephalography (EEG), electrocardiography (ECG), galvanic skin response, respiration, and facial expression analysis. We offer any combination of biophysical and neurophysiological sensors, which can be integrated with your own sensors and synchronized via the iMotions recording software to provide further insight into the User Experience. We use advanced signal processing and machine learning methods to objectively characterize emotional responses, with either offline or real-time analysis.

The system outputs a single emotion measure through multisensory data fusion. Emotions are characterized using the well-established psychological model of the valence-arousal circumplex. Valence refers to “pleasantness” and ranges from negative to positive, whereas arousal refers to excitation and ranges from inactive to active. For example, low arousal and low valence correspond to sadness; high arousal and high valence to excitement/joy; low arousal and high valence to serenity; and high arousal and low valence to anger. We also provide tailored visualizations of the results for further use (internal reporting, marketing, analysis, decision making). We can help you define suitable protocols and study goals, offer training and assistance with data collection, and provide additional emotional labels upon request (e.g., attention, boredom). Our prices depend on the number of subjects, the recording time, and the devices used.
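The quadrant mapping described above can be sketched as a small Python function. This is a minimal illustration, not ExperienceLab’s implementation: it assumes valence and arousal have both been scaled to the range [-1, 1] with 0 as the neutral midpoint, and the function name `quadrant_label` and the `neutral_band` threshold are hypothetical choices made for this example.

```python
def quadrant_label(valence: float, arousal: float, neutral_band: float = 0.1) -> str:
    """Map a (valence, arousal) point to a circumplex quadrant label.

    Assumption (not from the source): both dimensions are scaled to [-1, 1],
    with 0 as the neutral midpoint. Points that lie within `neutral_band`
    of 0 on BOTH axes are labelled "neutral".
    """
    if abs(valence) <= neutral_band and abs(arousal) <= neutral_band:
        return "neutral"
    if arousal >= 0:
        # High arousal: pleasant -> excitement/joy, unpleasant -> anger.
        return "excitement/joy" if valence >= 0 else "anger"
    # Low arousal: pleasant -> serenity, unpleasant -> sadness.
    return "serenity" if valence >= 0 else "sadness"


if __name__ == "__main__":
    print(quadrant_label(0.6, 0.7))    # excitement/joy
    print(quadrant_label(-0.4, 0.8))   # anger
    print(quadrant_label(0.5, -0.6))   # serenity
    print(quadrant_label(-0.3, -0.5))  # sadness
    print(quadrant_label(0.05, 0.0))   # neutral
```

The neutral band is included so that a point near the origin, such as the “middle arousal, middle valence” baseline described in the Natura Bissé pilot, is not forced into one of the four quadrants; its width is an arbitrary value for illustration.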

We have provided User Experience measurement for cosmetics companies, perfumes, museum exhibitions, concerts, product packaging, and advertising. Our clients include Akili, Indissoluble, Givaudan, GSK, Fundació La Caixa, Natura Bissé, and Everis. Some of these use cases are presented below.

At Fundació La Caixa, people walked through a painting exhibition while their physiological signals were captured and translated into emotions using the valence-arousal model. The emotion associated with each painting was then obtained by averaging the values of all individuals who took part in the experiment, so that the exhibition could be rearranged according to the emotions each painting provoked. An example of the emotional values of the paintings across the whole exhibition is presented in Figure 1.
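The per-painting averaging described above could look roughly like the sketch below. It is a hypothetical illustration, not the method used in the study: it assumes the input is a flat list of `(item_id, participant_id, valence, arousal)` samples, and it averages in two stages (first within each participant, then across participants) so that every individual contributes equally to a painting’s value. The function name and the toy numbers are invented for this example and are not study data.

```python
from collections import defaultdict
from statistics import mean


def average_by_item(samples):
    """Return {item_id: (mean_valence, mean_arousal)} across participants.

    `samples` is an iterable of (item_id, participant_id, valence, arousal)
    tuples (a hypothetical input format assumed for this sketch).
    """
    # Stage 1: collapse each participant's samples for an item into one point,
    # so participants who produced more samples do not dominate the average.
    per_participant = defaultdict(list)
    for item_id, participant_id, valence, arousal in samples:
        per_participant[(item_id, participant_id)].append((valence, arousal))

    # Stage 2: gather one averaged point per participant for each item.
    per_item = defaultdict(list)
    for (item_id, _participant), points in per_participant.items():
        per_item[item_id].append(
            (mean(v for v, _ in points), mean(a for _, a in points))
        )

    # Average the per-participant points across participants.
    return {
        item_id: (mean(v for v, _ in points), mean(a for _, a in points))
        for item_id, points in per_item.items()
    }


if __name__ == "__main__":
    toy_samples = [
        ("painting_a", "p1", 0.25, 0.5),
        ("painting_a", "p1", 0.75, 1.0),
        ("painting_a", "p2", -0.5, 0.25),
        ("painting_b", "p1", -0.5, -0.5),
    ]
    print(average_by_item(toy_samples))
    # {'painting_a': (0.0, 0.5), 'painting_b': (-0.5, -0.5)}
```

With a single flat average instead, participant `p1`’s two samples would pull `painting_a`’s valence towards positive values; the two-stage version is one reasonable reading of “the average values of all individuals”, not a confirmed detail of the study.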

In the GSK Shopper Science Lab study, participants browsed toothpaste packages while their physiological signals were captured and translated into valence-arousal values. The result was a measure of the User Experience of each package, which could help advertising companies select the most appropriate packaging for the emotions their clients want to target. An example of these packages in the valence-arousal space is presented in Figure 2.

For Natura Bissé, we ran a pilot to evaluate the User Experience of a sequence of cosmetic therapies. The goal was to evaluate the emotional response to each therapy separately, as well as to the whole sequence (i.e., how do people feel at the end of the whole experience?). The different therapies are projected into the valence-arousal space (Figure 3), as well as the beginning (baseline) and the end (close) of the average experience. On average, people felt neutral at the beginning (middle arousal, middle valence) and serene at the end of the experience (low arousal, high valence).

Last but not least, Givaudan wanted to test the User Experience of different odours. In particular, they provided an invigorating, a relaxing, and a neutral odour, and wanted to examine whether these odours indeed provoked the expected emotions in the people who experienced them. Using our ExperienceLab platform, we found that the participants’ average emotional values were high arousal for the invigorating odour, low arousal for the relaxing odour, and medium arousal and valence for the neutral (control) odour. We also noticed that the invigorating odour (invig) provoked low valence (i.e., unpleasantness), whereas the relaxing odour provoked high valence (pleasantness). These results are presented in Figure 4.

If you would like our help measuring and solving a User Experience problem, or if you have already identified one and need a solution, just let us know! We love customizing solutions! Contact us at info@starlab.es!