In my recent post “How good is my Computational Intelligence algorithm for EEG analysis?” we introduced the basic procedure and goal of evaluating the performance of a computational intelligence / machine learning system devoted to the analysis of EEG signals. Please refer to that post for the introductory part, where we discussed the basic framework for evaluating performance. We also described K-cross-fold-validation (K-CFV) as the most widely used procedure for performance assessment.

Hold-out: precursor of CFV

As we saw in that post, K-CFV is based on splitting the experimental data set into K different groups or subsets. One defining feature of this procedure is that no element is shared among the groups. In mathematical terms, the subsets are disjoint. As mentioned, we use one of the subsets for testing, whereas the remaining subsets together are delivered to the system for training. This operation is repeated for each of the K subsets. By the way, I have recently met some people who call the test set the validation set. It is worth mentioning that some works use both a test set and a validation set and give them different roles. So be aware that the nomenclature can be somewhat confusing.
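The splitting described above can be sketched in a few lines of plain Python. This is only a minimal illustration, not the code behind any particular toolbox: the function name `k_fold_splits`, the `seed` parameter and the choice to shuffle the indices before cutting the folds are my own assumptions.

```python
import random


def k_fold_splits(n_samples, k, seed=0):
    """Yield (train_idx, test_idx) pairs for K-fold cross-validation.

    Hypothetical helper for illustration; shuffling before splitting
    is an assumption, not something prescribed by the method itself.
    """
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Fold i takes every k-th shuffled index starting at position i,
    # so the folds are disjoint and their sizes differ by at most one.
    folds = [indices[i::k] for i in range(k)]
    for i, test_idx in enumerate(folds):
        # All folds except fold i are merged into the training set.
        train_idx = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield sorted(train_idx), sorted(test_idx)
```

Each sample index ends up in exactly one test set, which is the property that distinguishes K-CFV from the repeated hold-out procedure discussed next.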

One of the precursors of CFV is known as hold-out. Here we split the data set into two disjoint subsets. One subset is used for training the adaptive system, e.g. 70% of the data set. The other subset, the test or validation set, is used for measuring its generalization capability. The characterizing parameter of the hold-out (HO) procedure is the percentage of the data set used for training and, consequently, for testing. This basic operation can be repeated K times, in which case the resulting method is known as K-HO. Given that we do not impose any constraint on which samples go into the training set in each repetition, some samples can appear repeatedly in the test set over the K iterations. This does not happen in K-CFV, where each sample appears exactly once in the test set.
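A small sketch may help to see how the test sets overlap in K-HO. It assumes that every repetition reshuffles the whole data set; the names `repeated_hold_out` and `count_test_appearances` are hypothetical helpers introduced only for this illustration.

```python
import random
from collections import Counter


def repeated_hold_out(n_samples, train_fraction=0.7, k=5, seed=0):
    """Yield k (train_idx, test_idx) pairs, each from a fresh random split."""
    n_train = round(train_fraction * n_samples)
    if not 0 < n_train < n_samples:
        raise ValueError("train_fraction leaves an empty training or test set")
    rng = random.Random(seed)
    for _ in range(k):
        indices = list(range(n_samples))
        rng.shuffle(indices)  # no constraint carried over from earlier splits
        yield sorted(indices[:n_train]), sorted(indices[n_train:])


def count_test_appearances(splits):
    """Count how many times each sample index lands in a test set."""
    return Counter(idx for _, test_idx in splits for idx in test_idx)
```

With 10 samples, a 70% training share and K = 5, the five test sets contain 15 entries in total, so at least one of the 10 samples has to appear in the test set more than once, and some samples may never be tested at all.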

The only reason I can imagine why K-HO could be more interesting than K-CFV is the following. The largest test set you can have in CFV includes 50% of your data set, which happens in 2-CFV. For larger values of K, the percentage of data devoted to assessing the generalization capability of the system is smaller, namely 1/K of the data set. So if you have a system that performs very well as measured through K-CFV, you might be interested in finding the lowest number of training samples you can use before you detect a change in performance. HO gives you more flexibility in deciding the percentage of samples to be included in the test set.
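One way to run such an analysis is to sweep the training percentage with hold-out and record the score at each step. The sketch below only shows the bookkeeping: `hold_out_sweep` is a hypothetical name, and `evaluate` stands for a callback you would have to supply yourself, one that trains your system on `train_idx` and returns its score on `test_idx`.

```python
import random


def hold_out_sweep(n_samples, train_fractions, evaluate, seed=0):
    """Run one hold-out split per training fraction and collect the scores.

    `evaluate(train_idx, test_idx)` is an assumed user-supplied callback;
    nothing here knows how the underlying system is trained or scored.
    Returns a list of (n_train, n_test, score) tuples.
    """
    rng = random.Random(seed)
    results = []
    for fraction in train_fractions:
        n_train = round(fraction * n_samples)
        if not 0 < n_train < n_samples:
            raise ValueError("fraction leaves an empty training or test set")
        indices = list(range(n_samples))
        rng.shuffle(indices)
        train_idx, test_idx = indices[:n_train], indices[n_train:]
        results.append((n_train, len(test_idx), evaluate(train_idx, test_idx)))
    return results
```

Calling it with fractions such as `[0.9, 0.5, 0.2]` includes a split where 80% of the data is used for testing, something K-CFV cannot offer because its test share never exceeds 50%.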

Leave-One-Out or you are not lost if you have few data samples

One further alternative to CFV is known as leave-one-out (LOO). It is mostly used when data sets are very small. Since you have so few samples, you use just one of them for testing and the remaining samples for training. You repeat this basic procedure for each sample in your data set. So LOO is a particular case of K-CFV, where K equals the number of samples in the data set. This procedure is also known as jack-knife in some communities.
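Written as code, LOO is about as short as a resampling scheme can get. The function name `leave_one_out_splits` is just an illustrative choice.

```python
def leave_one_out_splits(n_samples):
    """Yield (train_idx, test_idx) pairs with exactly one test sample each."""
    for i in range(n_samples):
        # Sample i is held out; every other sample is used for training.
        train_idx = [j for j in range(n_samples) if j != i]
        yield train_idx, [i]
```

For a data set with N samples this produces N splits, which is what you would get from K-CFV with K = N.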

But there is more to LOO than just an alternative to CFV for small data sets. As Jay Gunkelman commented on my last post on performance evaluation, subject-based LOO can be used to detect outliers in an EEG data set. I had never thought about LOO in this way, but it looks like a very interesting idea. I will try to explain what I mean by subject-based LOO.

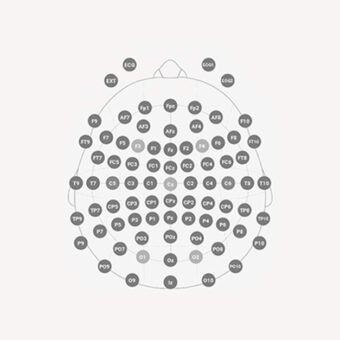

When you analyze EEG you normally acquire a set of signals from different subjects, let’s say a group of healthy controls and a group of epilepsy patients. You might need a classifier to distinguish between the EEG of subjects in the two groups. You can use subject-based LOO to evaluate the performance of such a system. In this case you leave all the EEG data of one subject out of the training and use it for testing. You repeat this procedure for each subject in your database. Usually most of the subjects within a group that you expect to be homogeneous show a similar performance. If the performance of a small subgroup differs to a great extent, you should take a closer look at their data. You might eventually infer that they present an anomaly in their EEG that causes this behavior, and that the initial group is not as homogeneous as you thought. If that subgroup is small enough, you might have found what are known as outliers.
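To make the idea concrete, here is a rough sketch of subject-based LOO together with a very simple way of flagging subjects whose performance stands apart. Both function names are hypothetical, and the flagging rule (distance from the group median above a fixed threshold of 0.2) is purely an assumption for illustration; it is not a method proposed in the comment mentioned above, and in practice you would pick a criterion suited to your data.

```python
from statistics import median


def leave_one_subject_out(subject_ids):
    """Yield (subject, train_idx, test_idx), holding out one subject at a time.

    subject_ids[i] names the subject that EEG segment i was recorded from.
    """
    for subject in sorted(set(subject_ids)):
        # All segments of the held-out subject go to the test set together.
        test_idx = [i for i, s in enumerate(subject_ids) if s == subject]
        train_idx = [i for i, s in enumerate(subject_ids) if s != subject]
        yield subject, train_idx, test_idx


def flag_candidate_outliers(scores_by_subject, max_deviation=0.2):
    """Return subjects whose score lies far from the group median.

    The median rule and the default threshold are illustrative assumptions.
    """
    center = median(scores_by_subject.values())
    return sorted(
        subject
        for subject, score in scores_by_subject.items()
        if abs(score - center) > max_deviation
    )
```

You would fill `scores_by_subject` with the performance obtained on each held-out subject, ideally one dictionary per group, and then inspect the EEG of any flagged subject by hand before calling it an outlier.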