In my last post I reviewed some techniques for evaluating the performance of computational intelligence / machine learning systems that analyze EEG signals. I would like to close this series with a post on a more advanced technique: the leave-pair-out (LPO) method. Having discussed K-fold cross-validation (K-CFV), hold-out (HO) and leave-one-out (LOO), I thought readers would be interested in this methodology.

Basic methodology for leave-pair-out

The leave-pair-out method is related to leave-one-out. However, instead of leaving one sample out of the training phase, as in LOO, we leave out two. As you may remember, in LOO you train your machine learning system with all samples in your data set except one. You then test the system on the sample that was left out, and you repeat this operation for every sample in the data set.
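For reference, the LOO splitting can be sketched in a few lines of Python. This is a minimal sketch assuming samples are identified by their index; `leave_one_out_splits` is a hypothetical helper name (scikit-learn's `sklearn.model_selection.LeaveOneOut` provides the same splits).

```python
from typing import Iterator, List, Tuple

def leave_one_out_splits(n_samples: int) -> Iterator[Tuple[List[int], int]]:
    """Yield (training indices, test index): one fold per sample."""
    for test_idx in range(n_samples):
        # Train on every sample except the one being tested
        train_idx = [i for i in range(n_samples) if i != test_idx]
        yield train_idx, test_idx

# 4 samples -> 4 folds, each training on 3 samples
for train, test in leave_one_out_splits(4):
    print(train, test)
# [1, 2, 3] 0
# [0, 2, 3] 1
# [0, 1, 3] 2
# [0, 1, 2] 3
```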

Leave-pair-out is a technique for binary classification problems. Like LOO, it is recommended when the available data set is small. The difference with LOO is that you leave out one sample of each class to test your system, i.e. you remove both a positive and a negative example from the training set. You repeat this operation for every possible pair formed by one positive and one negative example, so every sample in the data set is used for testing. To the best of my knowledge, this technique was first proposed in 2007 by Cortes and colleagues [3].
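The pair enumeration can be sketched as follows, assuming labels are encoded as 1 (positive) and 0 (negative); `leave_pair_out_splits` is a hypothetical helper, not a library function. Note that scikit-learn's `LeavePOut(p=2)` is not a drop-in replacement, since it also leaves out pairs of two examples from the same class.

```python
from itertools import product
from typing import Iterator, List, Sequence, Tuple

def leave_pair_out_splits(
    labels: Sequence[int],
) -> Iterator[Tuple[List[int], Tuple[int, int]]]:
    """Yield (training indices, (positive index, negative index)) for every
    pair made of one positive (label 1) and one negative (label 0) example."""
    positives = [i for i, y in enumerate(labels) if y == 1]
    negatives = [i for i, y in enumerate(labels) if y == 0]
    for p, n in product(positives, negatives):
        # Train on everything except the left-out positive/negative pair
        train_idx = [i for i in range(len(labels)) if i not in (p, n)]
        yield train_idx, (p, n)

labels = [1, 1, 0, 0, 0]
splits = list(leave_pair_out_splits(labels))
print(len(splits))  # 6  (2 positives x 3 negatives)
print(splits[0])    # ([1, 3, 4], (0, 2))
```

With n_pos positives and n_neg negatives there are n_pos x n_neg folds, e.g. 100 for a 10 + 10 data set versus 20 for LOO, so LPO costs more training runs.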

Main advantage of LPO: it allows training with balanced data sets

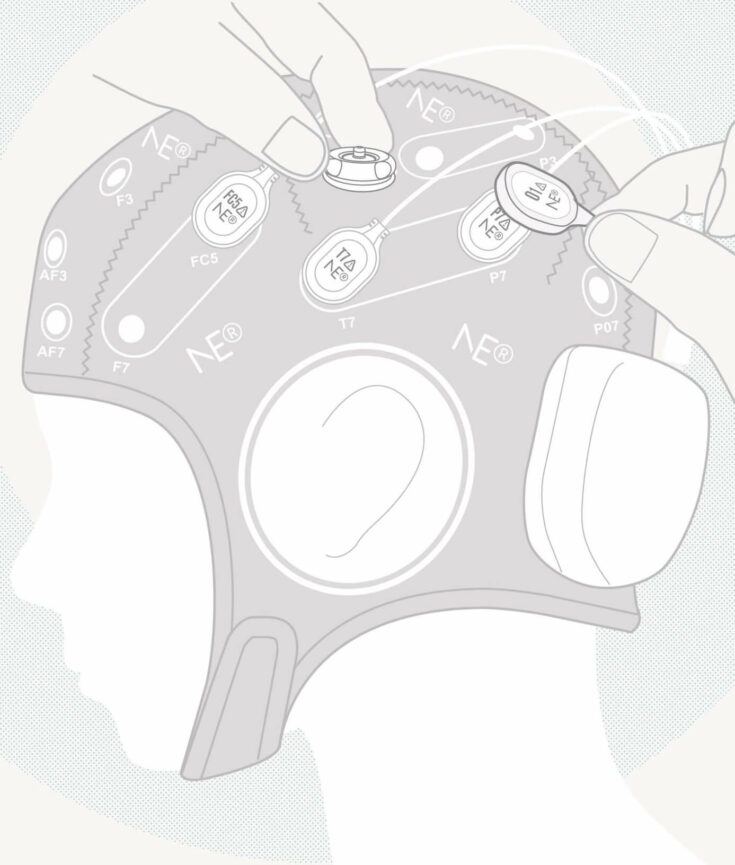

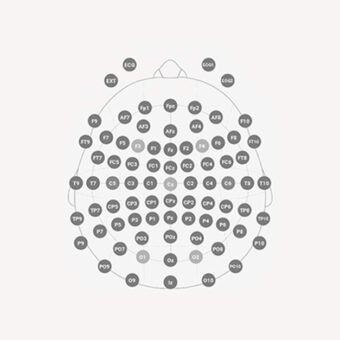

The main advantage of LPO in binary classification problems is that it does not break the balance between the positive and negative classes in the training set. By a balanced data set I mean one with the same number of examples in the positive and in the negative class. Some algorithms are very sensitive to being trained on unbalanced data sets, i.e. data sets with different numbers of positive and negative examples. If your data set is balanced, LPO keeps every training set balanced as well. Let’s imagine you have EEG data from 10 subjects with Parkinson’s Disease and 10 Healthy Controls. With leave-one-out, you have to train your classifier with 10 examples of one class and 9 of the other. With leave-pair-out, you always train your system with 9 examples of each group.
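A quick way to check the class counts of the 10 + 10 example is to enumerate every fold and record how many patients and controls remain for training. The sketch below assumes labels 1 = Parkinson’s Disease and 0 = Healthy Control; `train_counts` is a hypothetical helper.

```python
from collections import Counter
from typing import Set, Tuple

# 1 = Parkinson's Disease, 0 = Healthy Control (the 10 + 10 example)
labels = [1] * 10 + [0] * 10

def train_counts(left_out: Set[int]) -> Tuple[int, int]:
    """(patients, controls) left in the training set after removing `left_out`."""
    c = Counter(y for i, y in enumerate(labels) if i not in left_out)
    return c[1], c[0]

# LOO: one fold per subject
loo = {train_counts({i}) for i in range(20)}
# LPO: one fold per (patient, control) pair
lpo = {train_counts({p, n}) for p in range(10) for n in range(10, 20)}

print(sorted(loo))  # [(9, 10), (10, 9)]  -> always unbalanced
print(sorted(lpo))  # [(9, 9)]            -> always balanced
```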

An additional advantage of LPO: performance measures without graphical representation

One of the most widely used performance measures in pattern recognition, especially for diagnostic systems, is the Area Under the Curve (AUC). The AUC is normally computed by integrating the Receiver Operating Characteristic (ROC) curve with respect to the False Positive Rate. If you are not familiar with these procedures, the Wikipedia article [4] is a good starting point. As you can read there, computing the AUC requires computing the ROC curve first. This becomes a problem in leave-one-out and leave-pair-out for two reasons. First, each fold tests only one example (or one pair), so you need an additional procedure to combine the results of all folds into a single curve from which to compute the global AUC. There is no standard procedure for this summarization, and the result can differ a lot depending on the procedure you follow. Second, the global ROC curve resulting from such a procedure may have an irregular, hard-to-interpret shape.
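To make the ROC-based computation concrete, here is a minimal sketch that builds the ROC points by lowering a threshold through the scores and integrates them with the trapezoidal rule. The scores and labels are made-up toy values, and `roc_points` / `auc_trapezoid` are hypothetical helpers; in practice `sklearn.metrics.roc_curve` and `sklearn.metrics.roc_auc_score` do this for you. Note that it needs a whole set of scored examples, which is exactly what a single LOO or LPO fold does not provide.

```python
from typing import List, Sequence, Tuple

def roc_points(scores: Sequence[float], labels: Sequence[int]) -> List[Tuple[float, float]]:
    """(FPR, TPR) points obtained by lowering the decision threshold through
    every distinct score; label 1 = positive, 0 = negative (both must occur)."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    points = [(0.0, 0.0)]
    for t in sorted(set(scores), reverse=True):
        # Everything scoring at or above the threshold is called positive
        tp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 0)
        points.append((fp / n_neg, tp / n_pos))
    return points

def auc_trapezoid(points: List[Tuple[float, float]]) -> float:
    """Integrate TPR with respect to FPR with the trapezoidal rule."""
    return sum((x1 - x0) * (y0 + y1) / 2
               for (x0, y0), (x1, y1) in zip(points, points[1:]))

# Toy scores for 3 positive and 3 negative test examples
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4]
labels = [1, 1, 0, 1, 0, 0]
print(auc_trapezoid(roc_points(scores, labels)))  # 8/9, about 0.889
```

In this toy case, 8 of the 9 positive-negative pairs have the positive example scored higher, which is why counting correctly ranked pairs gives the same 8/9.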

Another advantage of leave-pair-out is that there is a procedure to compute the AUC without building the ROC curve. Airola and colleagues [5] have recently proposed such a methodology. It counts the left-out pairs in which the classification score of the positive example is larger than the classification score of the negative example, both scores coming from the model trained without that pair. The fraction of pairs satisfying this condition is the AUC estimate. Moreover, this fraction has a statistical interpretation: it estimates the probability that, if you randomly select one positive and one negative example from your data set, the classifier ranks them correctly.

Consider again the data set of EEG signal streams from 10 subjects with Parkinson’s Disease and 10 healthy subjects. The AUC computed with Airola and colleagues’ procedure estimates the probability of correctly distinguishing two subjects, one from each group, randomly selected from your subject pool. This probabilistic interpretation is an important feature, given that physicians are used to dealing with statistical measurements.
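The pair-counting procedure can be sketched as below. This is an illustration under stated assumptions, not Airola and colleagues’ code: the one-dimensional feature values are made-up toy numbers, and `train_centroid_scorer` is a hypothetical nearest-centroid scorer standing in for whatever classifier you actually use. Ties are counted as one half, a common convention for the AUC.

```python
from itertools import product
from statistics import mean
from typing import Callable, Sequence

Scorer = Callable[[float], float]

def train_centroid_scorer(xs: Sequence[float], ys: Sequence[int]) -> Scorer:
    """Hypothetical toy classifier: the score is higher when x is closer to
    the positive-class mean than to the negative-class mean."""
    mu_pos = mean(x for x, y in zip(xs, ys) if y == 1)
    mu_neg = mean(x for x, y in zip(xs, ys) if y == 0)
    return lambda x: abs(x - mu_neg) - abs(x - mu_pos)

def pair_credit(score_pos: float, score_neg: float) -> float:
    """1 if the pair is ranked correctly, 0.5 for a tie, 0 otherwise."""
    if score_pos > score_neg:
        return 1.0
    return 0.5 if score_pos == score_neg else 0.0

def lpo_auc(xs: Sequence[float], ys: Sequence[int],
            train: Callable[[Sequence[float], Sequence[int]], Scorer]) -> float:
    """Leave-pair-out AUC: fraction of positive-negative pairs ranked correctly
    by the model trained without that pair (needs >= 2 examples per class)."""
    pos = [i for i, y in enumerate(ys) if y == 1]
    neg = [i for i, y in enumerate(ys) if y == 0]
    credit = 0.0
    for p, n in product(pos, neg):
        keep = [i for i in range(len(ys)) if i not in (p, n)]
        score = train([xs[i] for i in keep], [ys[i] for i in keep])
        # Both scores come from the same model, trained without this pair
        credit += pair_credit(score(xs[p]), score(xs[n]))
    return credit / (len(pos) * len(neg))

# Made-up feature values: 4 positive (1) and 4 negative (0) subjects
xs = [2.1, 1.8, 2.5, 1.2, 0.9, 1.5, 0.7, 1.9]
ys = [1, 1, 1, 1, 0, 0, 0, 0]
print(lpo_auc(xs, ys, train_centroid_scorer))
```

Because both scores in each comparison come from the same trained model, no pooling of scores across folds is needed. Any function that takes training data and returns a scoring function can replace `train_centroid_scorer`.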