In this post I would like to introduce BrainPolyphony, a CRG Awards-funded project in which the Centre de Regulació Genòmica (CRG), Universitat de Barcelona, Mobilitylab, ASDI and Starlab will explore new ways of communication for patients with severe speech disabilities. Patients with neurological disorders such as cerebral palsy often experience significant communication problems. The tailor-made devices and protocols currently available to facilitate communication are expensive, not always accurate, and not accessible to all patients.

The main goal of BrainPolyphony is to use real-time sonification of brain signals to develop accessible communication tools. This alternative communication system will help cerebral palsy patients overcome their communication difficulties, support neurorehabilitation, and provide a tool for neurofeedback. We truly believe this new communication paradigm will make a huge difference for patients with severe communication problems, giving them new ways to interact with other people and their environment.

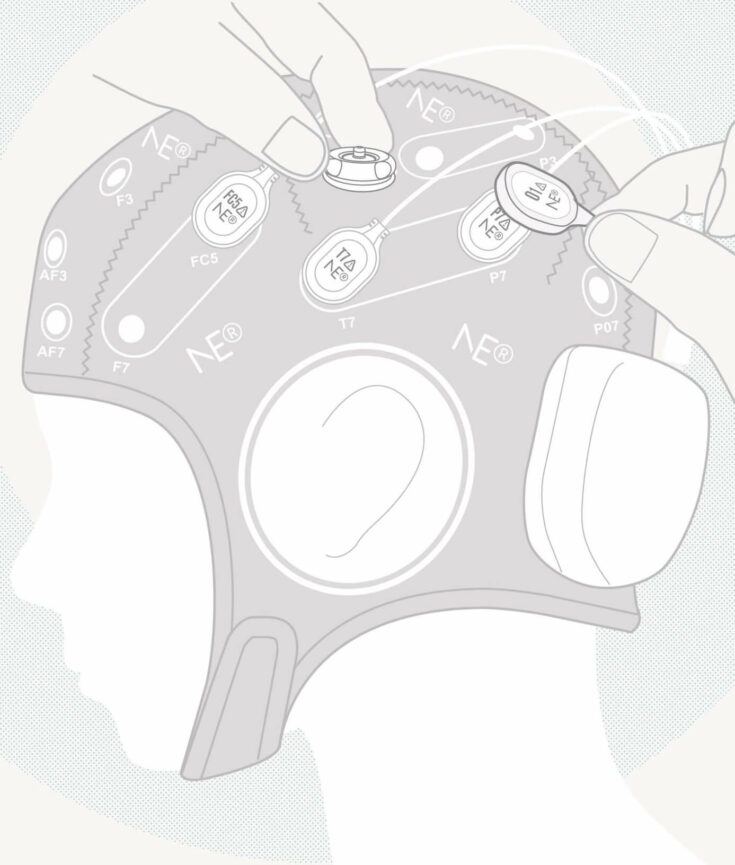

Enobio, a non-invasive, CE-marked medical, wireless electrophysiology sensor, will measure the electrical activity of the brain (EEG) from the surface of the patient's scalp and the activity of the heart (ECG) from the wrist, for subsequent sonification. Sonification here means presenting these EEG and ECG time series, and their characteristic patterns, acoustically and in real time. The electrophysiological patterns we aim to extract will reflect the patient's current emotional state.

We will build an EEG/ECG-based system for tracking emotions in real time. The application will be developed with Enobio's API and based on the two-dimensional valence-arousal model of emotion, in which arousal measures how activated (how dynamic) the emotional state is, and valence is a global measure of how positive or negative the associated feeling is. The system will also continuously monitor the heart rate and detect individual heartbeats.
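The post does not say how valence and arousal will be estimated from the EEG, so the sketch below assumes they arrive already scaled to [-1, 1] and only illustrates two simple pieces of bookkeeping the paragraph describes: turning ECG R-peak times into heart rate, and placing a (valence, arousal) pair in one of the four quadrants of the valence-arousal plane. The function names and quadrant labels are hypothetical and are not part of Enobio's API or the BrainPolyphony software.

```python
from typing import List, Sequence


def heart_rates_bpm(r_peak_times_s: Sequence[float]) -> List[float]:
    """Instantaneous heart rate (beats per minute) from consecutive R-peak times in seconds.

    Hypothetical helper: assumes R-peaks have already been detected in the ECG.
    """
    rates = []
    for earlier, later in zip(r_peak_times_s, r_peak_times_s[1:]):
        rr = later - earlier  # R-R interval in seconds
        if rr <= 0:
            raise ValueError("R-peak times must be strictly increasing")
        rates.append(60.0 / rr)
    return rates


def emotion_quadrant(valence: float, arousal: float) -> str:
    """Label a point in the valence-arousal plane.

    Assumes both coordinates are already scaled to [-1, 1]; the labels are
    illustrative names for the four quadrants, not project definitions.
    """
    if valence >= 0:
        return "excited/happy" if arousal >= 0 else "relaxed/calm"
    return "stressed/angry" if arousal >= 0 else "sad/bored"
```

For example, R-peaks at 0.0 s, 1.0 s and 1.5 s give heart rates of 60 and 120 bpm, and a point with positive valence and negative arousal falls in the "relaxed/calm" quadrant, the state the post imagines rewarding with a cheerful tune.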

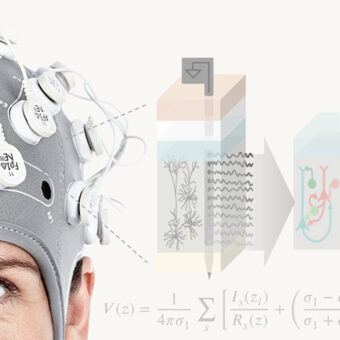

The online electrophysiological data analysis platform will stream the EEG/ECG time series and the computed emotional levels in real time to sonification software that transforms them into music. This software will consist of Pure Data-based algorithms that either create music from the incoming information or modify the parameters of tracks that are already playing. Imagine that a drum is played each time your heart beats, the pitch of your favourite song rises as your heart rate goes up, and a cheerful tune is played when the system detects that you are feeling good and relaxed. The artistic possibilities for BrainPolyphony composers are huge.
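To make the heartbeat-to-music idea concrete, here is a minimal sketch of the analysis side, assuming heart rate is mapped linearly to a pitch shift and that values are sent to Pure Data as FUDI messages (space-separated atoms terminated by a semicolon, the text format Pd's `[netreceive]` object reads). The mapping constants, the `beat` and `pitch` message names, and the helper functions are hypothetical choices for illustration, not the project's actual design.

```python
import socket
from typing import Tuple

# Hypothetical mapping constants for illustration; not BrainPolyphony parameters.
REST_BPM = 60.0             # heart rate mapped to no pitch change
MAX_BPM = 120.0             # heart rate mapped to the largest pitch change
MAX_SHIFT_SEMITONES = 12.0  # largest upward shift: one octave


def bpm_to_semitones(bpm: float) -> float:
    """Linearly map heart rate to an upward pitch shift, clamped to [0, MAX_SHIFT_SEMITONES]."""
    fraction = (bpm - REST_BPM) / (MAX_BPM - REST_BPM)
    fraction = min(max(fraction, 0.0), 1.0)
    return fraction * MAX_SHIFT_SEMITONES


def semitones_to_ratio(semitones: float) -> float:
    """Playback-rate ratio for an equal-tempered shift (12 semitones doubles the frequency)."""
    return 2.0 ** (semitones / 12.0)


def fudi_message(selector: str, *atoms: float) -> bytes:
    """Encode a Pd FUDI message: a selector plus numeric atoms, terminated by a semicolon."""
    parts = [selector] + [f"{atom:g}" for atom in atoms]
    return (" ".join(parts) + ";\n").encode("ascii")


def send_to_pd(sock: socket.socket, address: Tuple[str, int], message: bytes) -> None:
    """Send one FUDI message over UDP, e.g. to a Pd [netreceive -u <port>] object."""
    sock.sendto(message, address)
```

In use, the analysis loop could send `fudi_message("beat")` on every detected heartbeat and `fudi_message("pitch", bpm_to_semitones(bpm))` on every new heart-rate estimate through a `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)`; a Pd patch would then route `beat` to a drum sample and `pitch` to a playback-rate control. A linear, clamped mapping keeps the musical change predictable for the listener, but any monotonic curve would work.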

Once the entire system is up and running, it will be tested on 8 adult participants diagnosed with various cognitive or motor disabilities. Although the severity of their comorbidities varies, all selected participants have restricted speech or communication abilities and motor impairments, but no hearing problems. Patients' acceptance of the system and the interaction possibilities it opens up will show whether the project is successful.

The BrainPolyphony team's motivation is therefore to develop an easy-to-use, affordable, and widely accessible therapeutic and communication platform aimed at improving communication in cerebral palsy patients. The project may result in a new conceptual framework for understanding brain activity and in new communication methods, and it will also provide a new rehabilitation tool for patients with motor and cognitive impairments.

The project has just started, so stay tuned if you are interested: we will keep you posted with the project's news and updates.