In this post I want to talk about something quite different from anything we have discussed before: sonification and its combination with EEG. For those who don’t know what sonification is, a brief yet powerful definition is: the process of transforming information of any type into sound, possibly in a bijective way. One common use of data sonification is “the process of acoustically presenting a time-data series”. This application aims to take advantage of specific characteristics of human hearing (higher temporal, frequency and amplitude resolution) compared to human sight in order to attain better data representations. Sonification can also be combined with traditional visualisations to expand the dimensionality of the representation.
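To make the idea of acoustically presenting a time series concrete, here is a minimal sketch of one possible approach, usually called parameter mapping: each data value is mapped linearly onto a pitch and played as a short sine tone. The function names, the 220 to 880 Hz range and the note length are illustrative assumptions, not part of any particular sonification tool. Because the mapping is strictly monotonic, different values always get different pitches, which is the "bijective" property mentioned above.

```python
import math
import struct
import wave


def map_to_frequencies(series, f_min=220.0, f_max=880.0):
    """Linearly map each value of the series onto a pitch in [f_min, f_max] Hz."""
    lo, hi = min(series), max(series)
    span = hi - lo
    if span == 0:
        # A constant series carries no contrast; play everything at f_min.
        return [f_min for _ in series]
    return [f_min + (x - lo) / span * (f_max - f_min) for x in series]


def synthesize(freqs, note_s=0.2, rate=8000):
    """Render one sine tone per frequency, keeping the phase continuous."""
    samples = []
    phase = 0.0
    samples_per_note = round(note_s * rate)
    for f in freqs:
        for _ in range(samples_per_note):
            samples.append(math.sin(phase))
            phase += 2.0 * math.pi * f / rate
    return samples


def write_wav(path, samples, rate=8000):
    """Write mono 16-bit PCM so the result can be played in any audio player."""
    frames = b"".join(struct.pack("<h", int(s * 32767 * 0.8)) for s in samples)
    with wave.open(path, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)
        w.setframerate(rate)
        w.writeframes(frames)


if __name__ == "__main__":
    data = [0, 5, 10, 7, 3]  # made-up example series
    write_wav("sonified.wav", synthesize(map_to_frequencies(data)))
```

Running the script writes a one-second file in which rising values are heard as rising pitch. Real sonification designs often map data to loudness, timbre or spatial position as well.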

Stephen Barrass has been working on sonification for many years. On this webpage you can find many applications of sonification. Also, in the following video he gives an extensive lecture on his work in this field:

Aural City 2009: Prof. Dr. Stephen Barras from aural city on Vimeo.

Another impressive application of sonification is the case of Neil Harbisson. Rather than applying sonification to data, Harbisson applied it to his own senses. Being colour blind, he developed a colour sonification scheme that assigns a different sound to each colour sensed by a camera. With this, he can ‘hear’ the colours that the camera senses. Moreover, the sounds are delivered directly to his auditory system by bone conduction. In this way he became a cyborg with an electronic sense for capturing visual information. After years of ‘hearing’ colours, his brain adapted to the input and he is now even capable of dreaming in colour.
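As an illustration of what assigning a sound to each colour can look like, the sketch below maps the hue of an RGB colour onto a pitch within one octave. This is a hypothetical mapping written for this post, not Harbisson's actual scheme; the function name and the 440 Hz base pitch are assumptions.

```python
import colorsys


def colour_to_frequency(r, g, b, base_hz=440.0):
    """Map an RGB colour (components in 0..1) to a pitch in [base_hz, 2 * base_hz).

    Only the hue is used, so greys (which have no hue) all land on base_hz,
    the same pitch as pure red. A fuller scheme would also use saturation
    and brightness, for example to control loudness.
    """
    hue, _saturation, _value = colorsys.rgb_to_hsv(r, g, b)
    # hue runs from 0 (red) around the colour wheel towards 1; spread it over one octave.
    return base_hz * 2.0 ** hue


if __name__ == "__main__":
    for name, rgb in [("red", (1, 0, 0)), ("green", (0, 1, 0)), ("blue", (0, 0, 1))]:
        print(name, round(colour_to_frequency(*rgb), 1), "Hz")
```

With this mapping, moving around the colour wheel from red through green to blue is heard as a steadily rising tone.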

Another interesting application is to combine EEG with sonification, not only to represent EEG signals through sound (which makes a lot of sense, given the high resolution that sound provides), but also for other interesting paradigms.

Sebastián Mealla works on the Teclepatia project, “Mechanisms of unconscious communication in collaborative work environments”. He developed a BCI that contributes to the collaborative instrument Reactable. EEG and ECG signals are transformed into parameters for the different sound synthesisers and controllers of the table.

b-Reactable from Sebastián Mealla on Vimeo.

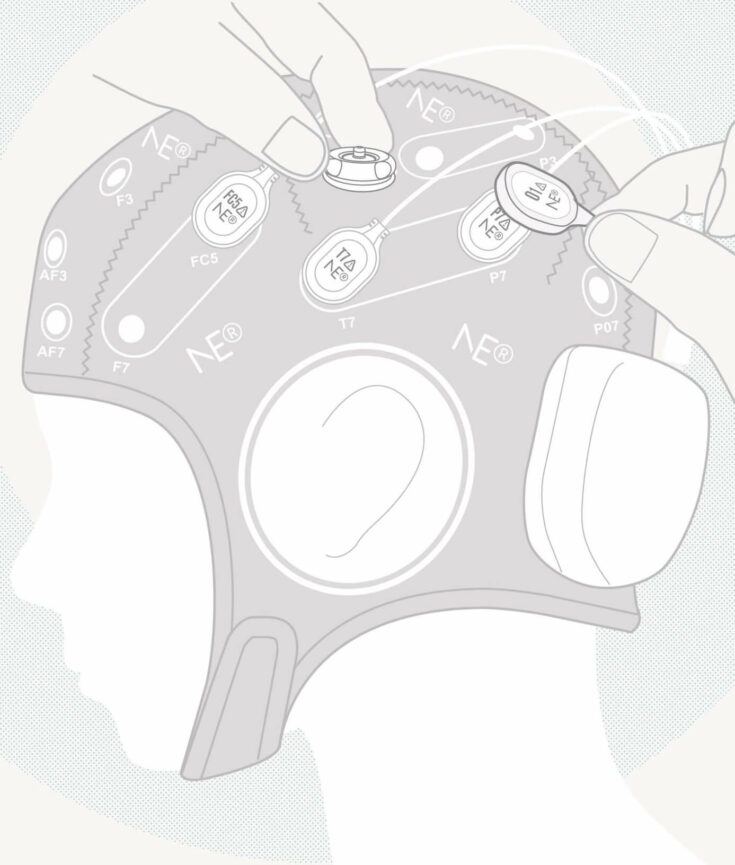

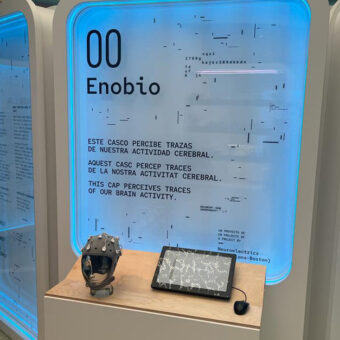

EEG sonification is also among the different approaches that Stephen Barrass has taken. He worked on several experiments in which music performers wear Enobio to capture EEG, ECG and EOG signals; those signals are then mapped into sounds that are played back into the performance in real time.

Another application of sonification to music generation is the work of Eduardo Miranda. He developed a system that uses EEG signals to “steer generative rules to compose and perform music”. Different EEG features, such as the activation of different frequency bands, are mapped onto the generative rules and also control the tempo and loudness of the performance.
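To give a feel for how frequency-band activity could drive tempo and loudness, here is a rough sketch. It is not Miranda's system: the band limits, the choice of beta activity for tempo and alpha activity for loudness, and all function names are assumptions made for illustration. Band power is computed with a plain discrete Fourier transform so the example needs only the standard library.

```python
import cmath
import math

# Conventional EEG band limits in Hz (approximate; definitions vary between authors).
BANDS = {"theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0)}


def band_powers(signal, rate):
    """Sum the squared DFT magnitudes that fall inside each band."""
    n = len(signal)
    powers = {name: 0.0 for name in BANDS}
    for k in range(1, n // 2):
        freq = k * rate / n
        band = next((b for b, (lo, hi) in BANDS.items() if lo <= freq < hi), None)
        if band is None:
            continue  # skip bins outside the bands of interest
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(signal))
        powers[band] += abs(coeff) ** 2
    return powers


def to_performance(powers, tempo_range=(60.0, 140.0)):
    """Hypothetical mapping: beta share sets the tempo, alpha share the loudness."""
    total = sum(powers.values())
    if total == 0:
        return {"tempo_bpm": tempo_range[0], "loudness": 0.0}
    beta_share = powers["beta"] / total
    alpha_share = powers["alpha"] / total
    tempo = tempo_range[0] + beta_share * (tempo_range[1] - tempo_range[0])
    return {"tempo_bpm": tempo, "loudness": alpha_share}


def sine(freq_hz, rate=128, seconds=1.0):
    """Synthetic stand-in for one second of single-channel EEG."""
    return [math.sin(2.0 * math.pi * freq_hz * t / rate)
            for t in range(int(rate * seconds))]


if __name__ == "__main__":
    print(to_performance(band_powers(sine(10.0), 128)))  # alpha-dominated window
    print(to_performance(band_powers(sine(20.0), 128)))  # beta-dominated window
```

In a live setting the same two functions would be applied to successive short windows of the recording, so tempo and loudness follow the EEG over time. A real system would also need artefact rejection and smoothing, which are left out here.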

Many thanks to @smealla and @capitancambio for their valuable input for this post!