After the well-known science-fiction writer Isaac Asimov published “Foundation’s Edge”, the fourth book of the Foundation series, the novel won the Hugo Award and the Locus Award and was nominated for the Nebula Award, all in 1983. These awards and nominations recognized not only the book but also Asimov’s momentous career: he had written an astounding 262 books during his 44 years of writing. Quite a thing, isn’t it?

I must confess that I read this book many years ago and have forgotten many details of the story, except for one that has always stuck in my mind. The human-machine interface (HMI) that allowed Golan Trevize (officer of the First Foundation) to pilot his spaceship in search of “Earth” with Dr. Pelorat was absolutely outstanding, and sweet candy for neuroadaptive technologists and physiological-computing innovators. In “Foundation’s Edge”, Golan Trevize would pilot the spaceship just by sitting at its operational desk and extending his arms. The HMI would then wirelessly connect to Golan’s mind and body. In doing so, it could interpret all of Golan’s electro-cortical and physiological activity and carry out all flight control operations.

The spaceship and the pilot would couple into a single operational unit, as if they shared one consciousness. The spaceship would know and carry out the pilot’s orders and intentions, constantly adapting its performance to the pilot’s desired actions. From the pilot’s perspective, Golan simply knew, intuitively, how to command the spaceship. The HMI would carry out all the computations needed for flying the ship and would know when to take control in dangerous situations. It was completely aware of, and had access to, Golan Trevize’s environment, state of mind, and behavioral state.

Well, after this long introduction, I must say that I am not here to talk about Mr. Asimov’s novel, but I could not think of a better example of an HMI to illustrate what physiological computing is about. The HMI in the novel relied on physiological computing, which measures and records all possible sources of physiological data as system input. These sources include electrical impulses from brain cells, electrical impulses from muscles, heart rate, galvanic skin response (GSR), gesture and behavioral information, and so on. In scientific terms, the electrical signals are collected through techniques known as electroencephalography (EEG), electromyography (EMG), and electrocardiography (ECG). These techniques record electrical activity from the body to build a continuous, up-to-date picture of the user’s emotional, cognitive, and physical state. This allows the system to respond and adapt dynamically to the user. For a review of the current state of the art in physiological computing, see the references below [13,14].

Physiological computing is a powerful tool, but there are still improvements to be made. Neuroadaptive technology can be integrated with other fields, including brain-computer interfaces (BCI), affective computing, and neurofeedback, in order to add further physiological and behavioral data to the decision-making loop. Building on this idea of integration, there are many examples of modern neuroadaptive technology:

- Providing a means of communication for people suffering from paralysis through spelling devices that use EEG recordings. This kind of BCI application has greatly improved since the first spelling device in 1988, which used the P300 component of event-related potentials (ERPs) and achieved a transfer rate of 12 bits/min, or 2.3 characters per minute [1]. Now, in 2019, spelling devices can reach a transfer rate of 175 bits/min, or 35 error-free letters per minute [2].

- Controlling external devices such as robotic wheelchairs [3], screen cursors [4] and other BCI devices [5, 15]

- Recognizing the intention to move [6], which improves on previous BCI applications based on motor imagery [7]

- Measuring cognitive workload and vigilance through EEG and electrooculography, or EOG [8,9]

- Characterizing the emotional state of a person via affective computing [10]

Notably, all of the examples above rely on EEG or EOG data. By adding further physiological inputs to these modern neuroadaptive technologies, physiological computing opens up new possibilities for gaining insight into the user and for advancing adjacent fields such as machine learning. By improving how the data in the decision-making loop are interpreted, we will be able to create HMI applications that understand and adapt to the user and so provide a more seamless experience.
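
The bit rates quoted for the spelling devices above can be sanity-checked with a few lines of Python. This is a minimal sketch, assuming that every selection is error-free and that all targets are equally likely, so that one selection carries log2(N) bits. The target counts are assumptions, not figures stated in this post: 36 corresponds to a 6×6 letter matrix, as commonly described for the original P300 speller, and 32 is simply the value implied by 175 bits/min at 35 letters per minute. The function name `bits_per_minute` is hypothetical.

```python
import math


def bits_per_minute(selections_per_minute: float, n_targets: int) -> float:
    """Bit rate for error-free selections among equally likely targets."""
    if n_targets < 2:
        raise ValueError("need at least two targets to convey information")
    # Each error-free selection among N equally likely targets carries log2(N) bits.
    return selections_per_minute * math.log2(n_targets)


# Assumed target counts (not given in the post): 36 and 32.
rate_1988 = bits_per_minute(2.3, 36)
rate_2019 = bits_per_minute(35, 32)

print(f"1988 speller: {rate_1988:.1f} bits/min")  # 11.9, i.e. roughly 12
print(f"2019 speller: {rate_2019:.1f} bits/min")  # 175.0
```

Under these assumptions the numbers line up with the figures quoted above. Real systems make errors, and published rates usually account for accuracy, so this is only a rough consistency check.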

Thus, the future of physiological computing offers new challenges, and its applications will depend greatly on the following advances:

- Improving our wearable technology for data acquisition and processing.

- Increasing our knowledge and understanding of the physiological data.

- Heightening the capabilities of machine learning. Improving our machine learning models with more heterogeneous physiological data will allow us to make more accurate predictions of user actions and intentions.

Therefore, it is not surprising to see new applications of physiological computing in the aeronautics and automotive industries, where the machine and its user (driver or pilot) must work together. In these two fields, we can find some of the latest developments in physiological computing. A good example is the addition of neural and physiological activity to autonomous driving control operations. In this area, autonomous cars continuously estimate the driver’s cognitive state to know when to take control and avoid collisions. They can also improve the driving experience by automatically adjusting the car’s level of autonomy as well as its ambient environment.

Regarding aeronautic applications of physiological computing, we are still quite far from seeing HMI applications like the one that Asimov’s Mr. Trevize used in “Foundation’s Edge”. Nonetheless, the aeronautics industry is pushing the development of physiological computing applications. This is not surprising if we take into account the mental workload that a pilot carries when performing various piloting tasks: controlling the aircraft trajectory, monitoring the flight parameters, performing checklists, communicating with air traffic controllers, identifying potential threats (collisions, failures and bad weather conditions), adapting the flight plan, etc.

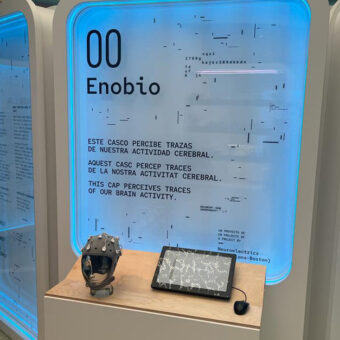

In this scenario, physiological computing is the way forward. Although it is at a very early stage compared to Asimov’s design, we can already find ongoing research with pilots in the literature that monitors their state of awareness as well as their mental workload [11,12]. This last work on monitoring mental workload was actually carried out using one of our Neuroelectrics devices to measure EEG, an 8-channel Enobio with dry electrodes. For another example of EEG recordings from pilots, please check our previous post “eeg on the air”.
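
To give a flavour of what monitoring mental workload from EEG spectral power can involve, here is a minimal sketch in plain Python. It assumes a single EEG channel, a naive discrete Fourier transform, and a theta/alpha power ratio as the workload index. That ratio is only one commonly used proxy and is an assumption here, not the method used in [12]. The band limits (4 to 8 Hz and 8 to 13 Hz), the 128 Hz sampling rate, the synthetic signals and all function names are illustrative.

```python
import cmath
import math


def band_power(samples, fs, f_lo, f_hi):
    """Mean spectral power of `samples` over DFT bins in [f_lo, f_hi) Hz."""
    n = len(samples)
    powers = []
    for k in range(1, n // 2):
        freq = k * fs / n  # frequency of DFT bin k
        if f_lo <= freq < f_hi:
            coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                        for i, x in enumerate(samples))
            powers.append(abs(coeff) ** 2 / n ** 2)
    if not powers:
        raise ValueError("no DFT bins fall inside the requested band")
    return sum(powers) / len(powers)


def workload_index(samples, fs):
    """Theta/alpha power ratio (assumed proxy: higher suggests more workload)."""
    theta = band_power(samples, fs, 4.0, 8.0)
    alpha = band_power(samples, fs, 8.0, 13.0)
    return theta / alpha


FS = 128  # sampling rate in Hz (assumed)
T = [i / FS for i in range(2 * FS)]  # two seconds of sample times


def synthetic_eeg(theta_amp, alpha_amp):
    """Toy signal: a 6 Hz 'theta' sine plus a 10 Hz 'alpha' sine."""
    return [theta_amp * math.sin(2 * math.pi * 6 * t)
            + alpha_amp * math.sin(2 * math.pi * 10 * t) for t in T]


relaxed = synthetic_eeg(theta_amp=1.0, alpha_amp=2.0)
loaded = synthetic_eeg(theta_amp=2.0, alpha_amp=1.0)

print("relaxed index:", round(workload_index(relaxed, FS), 3))
print("loaded index: ", round(workload_index(loaded, FS), 3))
```

With these toy signals the index comes out higher for the theta-dominated trace than for the alpha-dominated one. A real pipeline would add filtering, artifact rejection, multiple channels and a model validated against behavioural data.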

Taking into account the quote often attributed to Abraham Lincoln, “the best way to predict the future is to create it”, I think a good starting point for developing the technology of the future is to imagine that future. Can you think of a new application that would benefit from physiological computing? If so, please leave your comments below.

References

[1] Farwell, L.A.; Donchin, E. Talking off the top of your head: toward a mental prosthesis utilizing event-related brain potentials. Electroencephalography Clin. Neurophys. 1988, 70, 510–523.

[2] Sebastian Nagel, Martin Spuler. World’s Fastest Brain-Computer Interface: Combining EEG2Code with Deep Learning.

[3] Becedas J. Brain-machine interfaces: basis and advances. IEEE Transactions on Systems, Man and Cybernetics Part C: Applications and Reviews. 2012;42(6):825–836

[4] Graimann B., Allison B. Z., Pfurtscheller G. Brain-Computer Interfaces: Revolutionizing Human-Computer Interaction. Berlin, Germany: Springer; 2010.

[5] Thorsten O. Zander, Laurens R. Krol, Niels P. Birbaumer, and Klaus Gramann. Neuroadaptive technology enables implicit cursor control based on medial prefrontal cortex activity

[6] Berenice Gudiño-Mendoza. Detecting the Intention to Move Upper Limbs from Electroencephalographic Brain Signals

[7] Pfurtscheller G, Neuper C. Motor imagery activates primary sensorimotor area in humans. Neurosci Lett. 1997 Dec 19; 239(2-3):65-8.

[8] Spencer C. Castro, David L. Strayer, Dora Matzke, Andrew Heathcote. Cognitive Workload Measurement and Modeling Under Divided Attention.

[9] Wei-Long Zheng, Bao-Liang Lu. A Multimodal Approach to Estimating Vigilance Using EEG and Forehead EOG.

[10] Lachezar Bozhkov, Petia Georgieva, Isabel Santo, Ana Pereira, Carlos Silva. EEG-based Subject Independent Affective Computing Models.

[11] Dehais, F.; Behrend, J.; Peysakhovich, V.; Causse, M.; Wickens, C.D. Pilot flying and pilot monitoring’s aircraft state awareness during go-around execution in aviation: A behavioral and eye tracking study. Int. J. Aerosp. Psychol. 2017.

[12] Frédéric Dehais, Alban Duprès, Sarah Blum, Nicolas Drougard, Sébastien Scannella, Raphaëlle N. Roy and Fabien Lotte. Monitoring Pilot’s Mental Workload Using ERPs and Spectral Power with a Six-Dry-Electrode EEG System in Real Flight Conditions.

[13] Fairclough, S.H. & Gilleade, K. (Eds). 2014. Advances In Physiological Computing. Springer.

[14] Fairclough, S. H. 2009. Fundamentals of physiological computing. Interacting with Computers, 21, 133-145

[15] Abiri R, Borhani S, Sellers EW, Jiang Y, Zhao X. A comprehensive review of EEG-based brain-computer interface paradigms.